Apple introduced the Magnifier tool to the iPhone in 2018 with iOS 12. Initially, the tool let iPhone users zoom in on objects in their surroundings. Since then, however, it has gained a slew of new features.

Over the past few years, Apple has added the ability to adjust the image’s brightness and contrast, freeze frames to review them, and more.

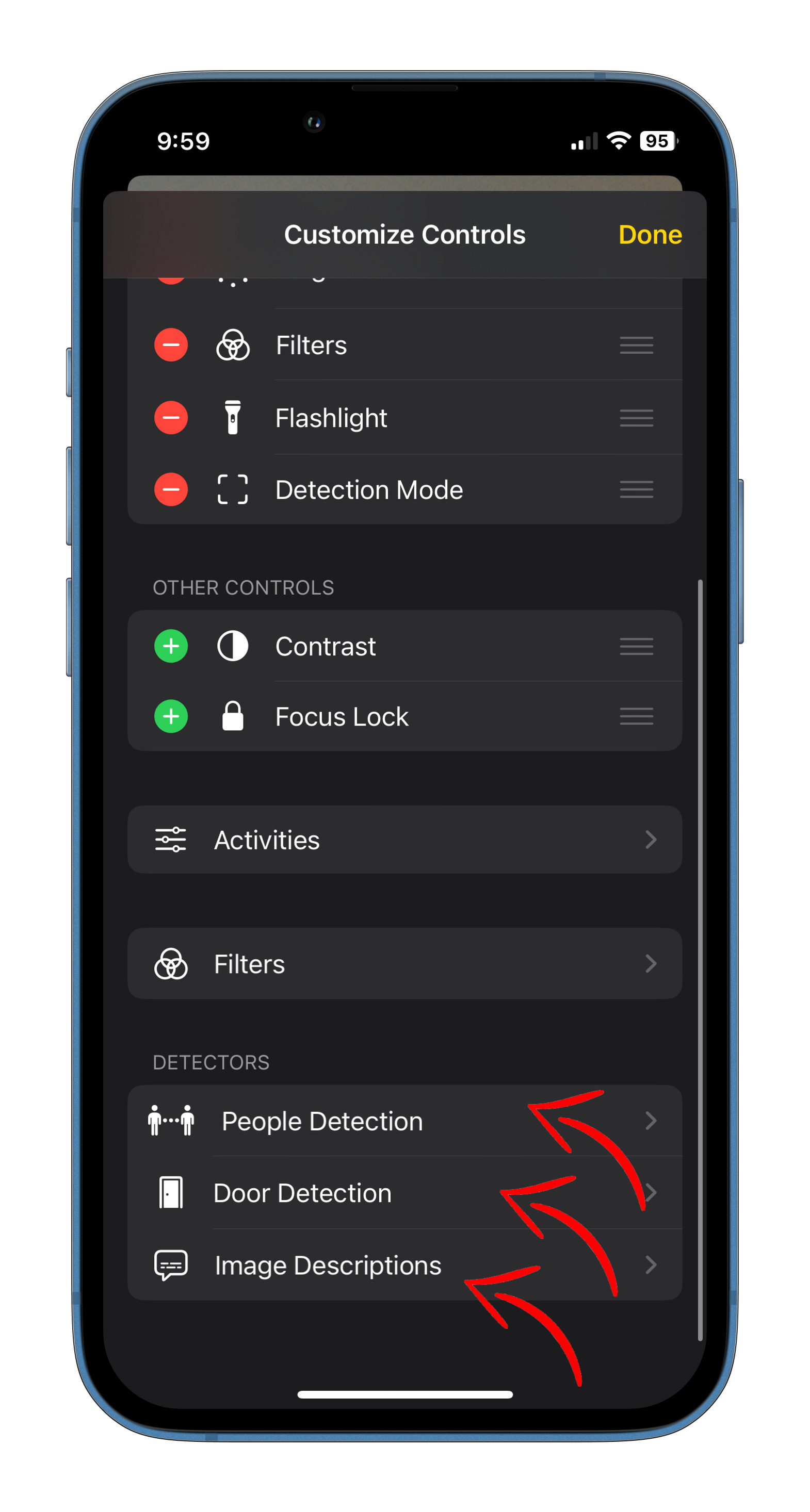

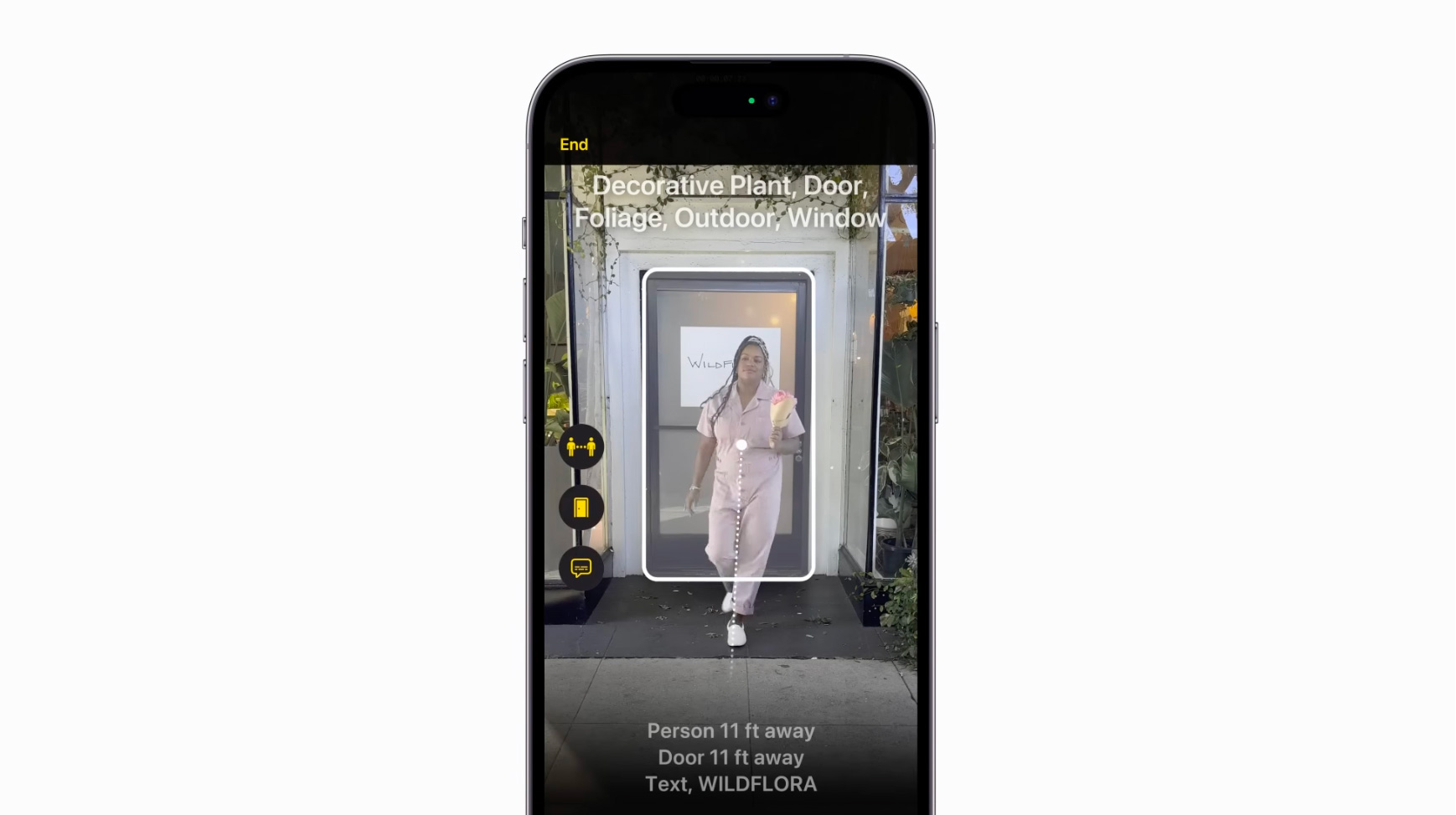

Now, using the same tool on an iPhone with LiDAR, you can identify when people, doors and objects are near you as you move around. The sub-tool is called ‘Detection Mode,’ and it lets you use features like ‘People Detection,’ ‘Door Detection,’ and ‘Image Descriptions’ directly within the Magnifier to get descriptions of your surroundings, including text and symbols around a door, how far away from it you’re standing, and even how to open it.

The tool is part of Apple’s Vision accessibility features. It can be used by anyone, though it’s especially useful for those who are visually impaired, who can use Siri and VoiceOver to navigate to the feature and receive voice prompts about their surroundings.

Before proceeding, it’s important to note that since the feature relies on LiDAR, it’s only available on iPhones with a LiDAR scanner, which means the iPhone 12 Pro and later Pro models.

To enable the feature, you’ll have to locate ‘Magnifier.’ Swipe down on your home screen to pull up the search screen, type in ‘Magnifier’ and open the app. Tap the gear icon on the left, as seen in the screenshot below, and then tap ‘Settings.’

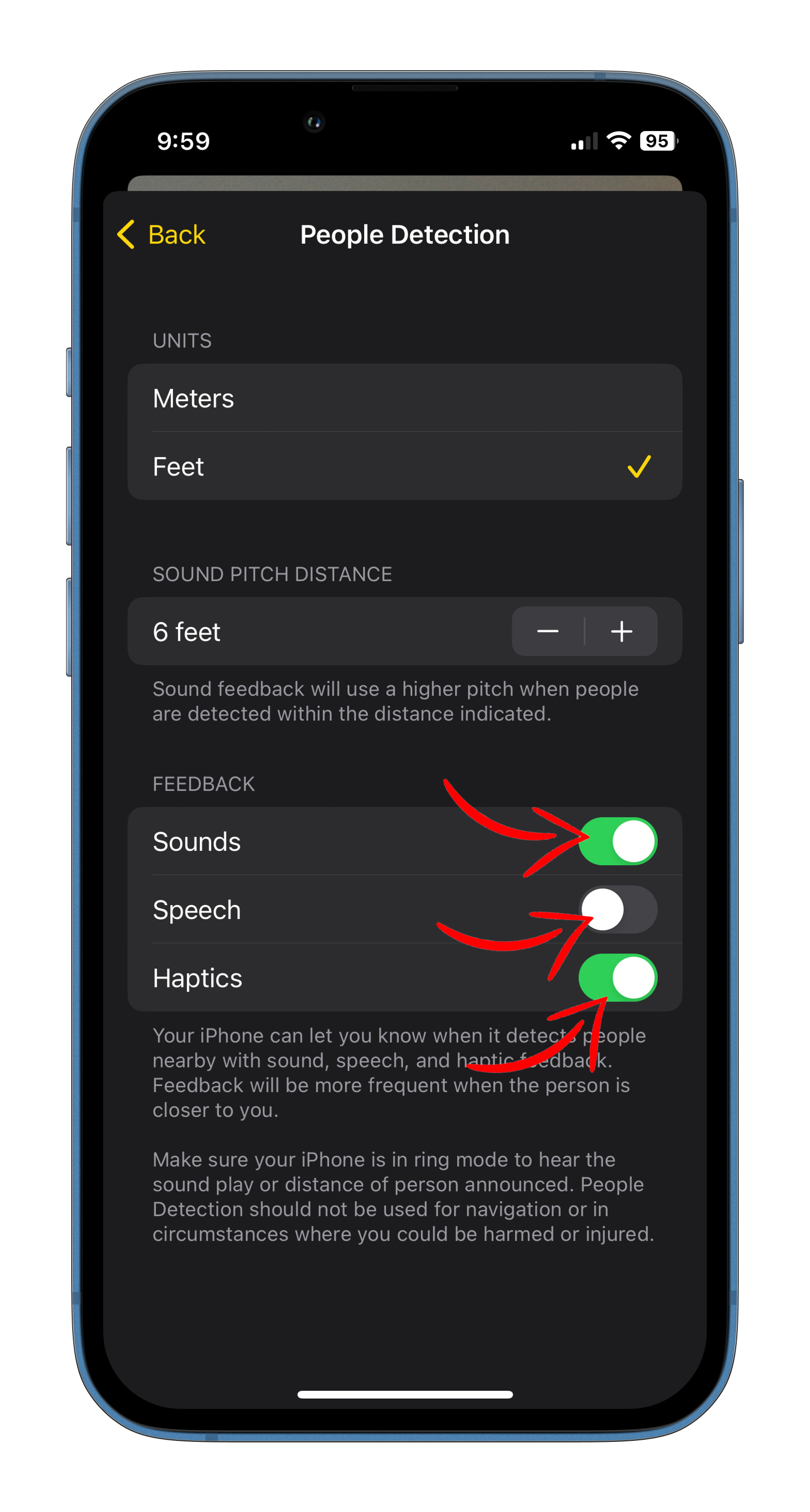

From here, enable ‘Detection Mode,’ and it will appear as an option whenever you open the Magnifier. You can then customize the settings so that feedback about your surroundings is delivered via sound, speech and haptics.

“Your iPhone can let you know when it detects people (and doors) early with sound, speech, and haptic feedback,” reads the settings page. “Feedback will be more frequent when the person (or door) is closer to you.”

Now, whenever you have Detection Mode open and you’re pointing your phone around, your device will notify you with visual, sound, speech and haptic feedback (depending on which feedback methods you enabled) about how far away you are from an object, person or door. Additionally, if a door you’re pointing your device at has any signs or labels, they will be displayed on-screen and read out loud, alongside instructions on how to open the door and which way it opens.

MobileSyrup may earn a commission from purchases made via our links, which helps fund the journalism we provide free on our website. These links do not influence our editorial content.