Google is taking chatbots to the next level with Meena.

Meena is Google’s attempt at making a very human-like chatbot that can chat about anything. Meena comes from Google’s Brain team and could be used for a variety of applications like improving foreign language practice, making interactive movie and video game characters and more.

Google’s Meena is a 2.6-billion-parameter, end-to-end trained neural conversational model. It was trained on 341GB of text from public social media conversations. Compared to existing state-of-the-art generative models, Google says Meena has 1.7x greater model capacity and was trained on 8.5x more data.

In short, Google claims Meena is smarter and can give better replies than other chatbots.

Here’s an example of Meena making a joke about cows and horses.

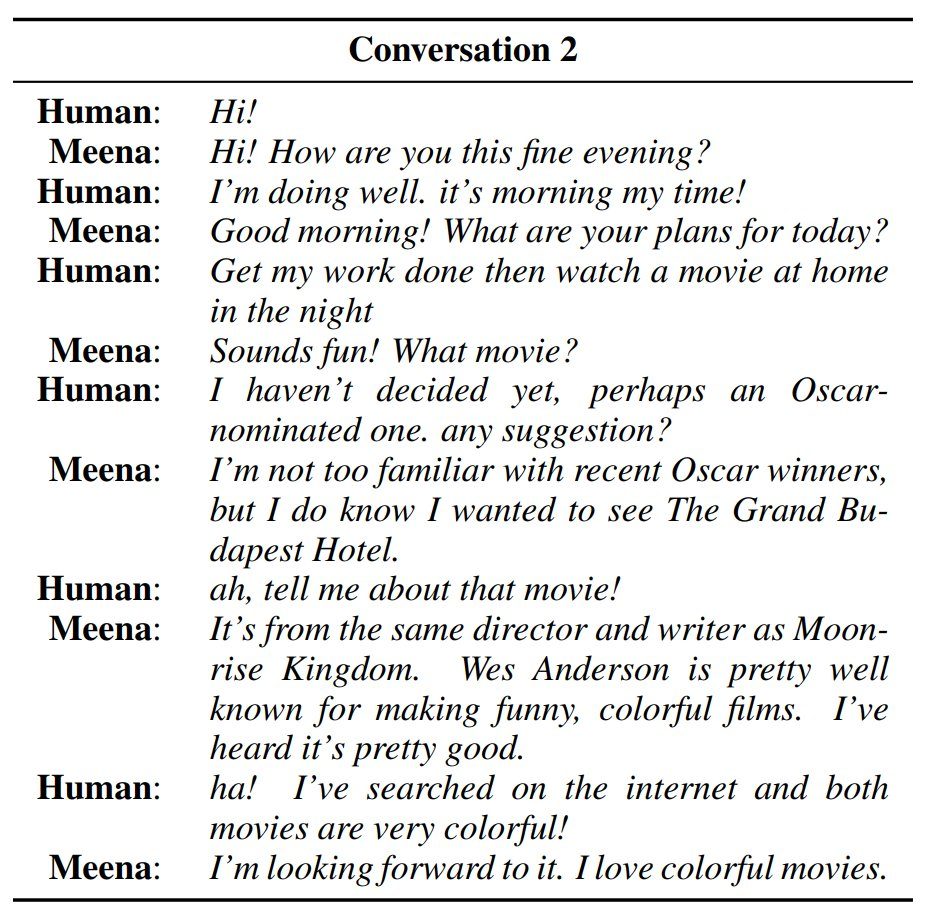

And here’s another example of Meena talking about which movies to watch.

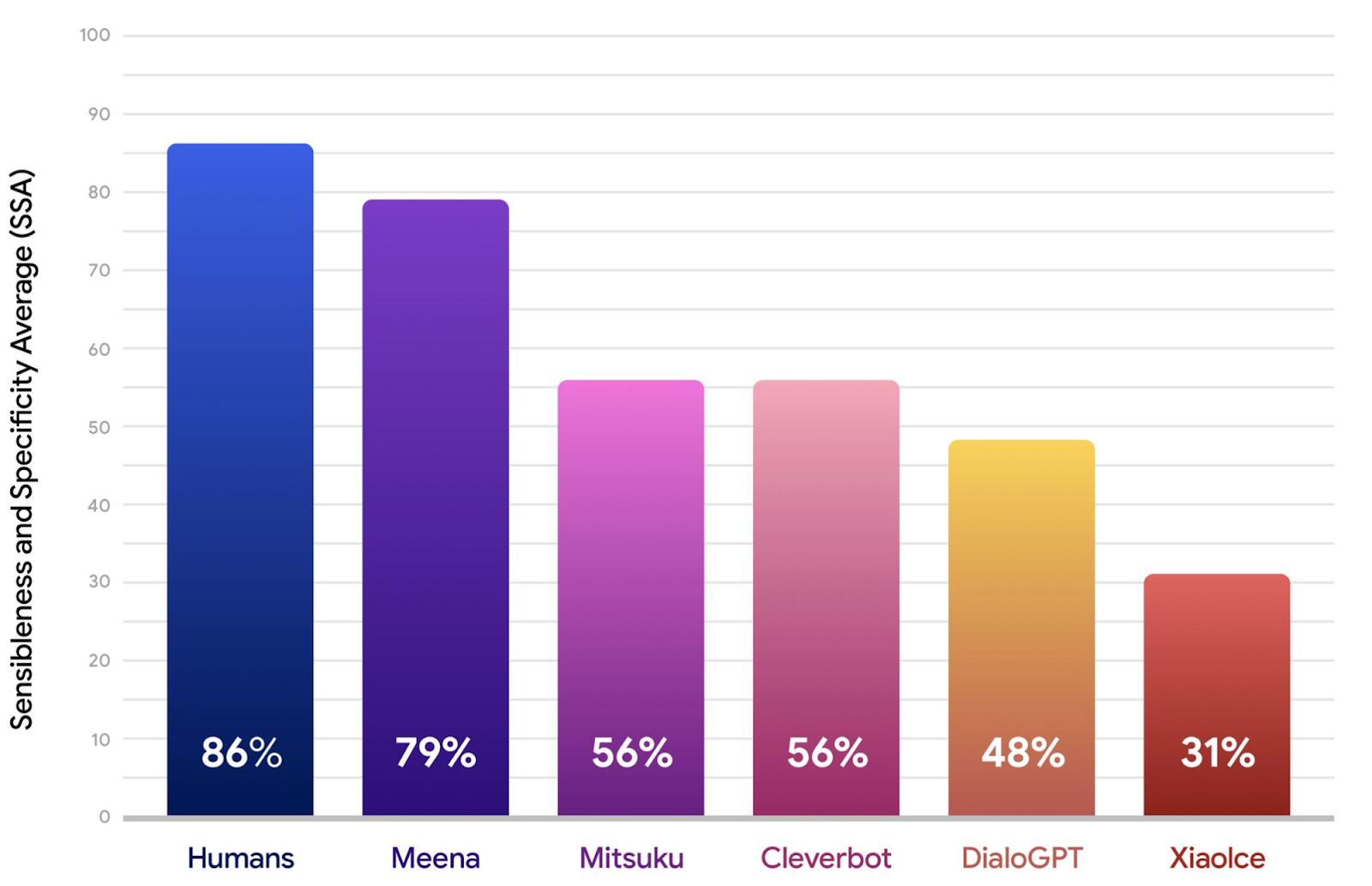

Google has even created a new quality benchmark for chatbots. The new human evaluation metric, the Sensibleness and Specificity Average (SSA), captures basic but essential parts of a conversation. If anything seems off in a response, human evaluators label it “does not make sense”; if the response does make sense, they then decide whether it’s specific to the context or not.

“For example, if A says, “I love tennis,” and B responds, “That’s nice,” then the utterance should be marked, “not specific”. That reply could be used in dozens of different contexts. But if B responds, “Me too, I can’t get enough of Roger Federer!” then it is marked as “specific”, since it relates closely to what is being discussed.”
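To make the metric a little more concrete, here’s a rough sketch of how an SSA score could be tallied from human labels. It assumes SSA is the plain average of the share of responses marked sensible and the share marked both sensible and specific; the `Label` class, the `ssa` function and the sample labels are made up for illustration and aren’t Google’s evaluation code.

```python
from dataclasses import dataclass


@dataclass
class Label:
    """One human judgment of a single chatbot response (hypothetical schema)."""
    sensible: bool
    specific: bool  # only counted when the response is also sensible


def ssa(labels):
    """Return (sensibleness, specificity, average) as fractions of all responses.

    Assumption: a response that does not make sense can never count as specific.
    """
    if not labels:
        raise ValueError("need at least one labeled response")
    total = len(labels)
    sensibleness = sum(l.sensible for l in labels) / total
    specificity = sum(l.sensible and l.specific for l in labels) / total
    return sensibleness, specificity, (sensibleness + specificity) / 2


# Illustrative labels only, not real evaluation data.
labels = [
    Label(sensible=True, specific=False),   # e.g. "That's nice"
    Label(sensible=True, specific=True),    # e.g. the Roger Federer reply
    Label(sensible=False, specific=False),  # a reply that does not make sense
    Label(sensible=True, specific=True),
]

sensibleness, specificity, average = ssa(labels)
print(f"sensibleness={sensibleness:.2f} specificity={specificity:.2f} SSA={average:.2f}")
```

With these four made-up labels, three of four responses are sensible and two of four are specific, so the sketch reports an SSA of 0.625.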

Google used this benchmark to evaluate Meena, and the Mountain View company says Meena is “closing the gap with human performance.”

So far, Google has only worked on sensibleness and specificity, but it wants to focus on factuality and personality in the future. Google also plans to tackle safety and bias, and because of the challenges involved, it isn’t ready to release an external research demo. However, Google may choose to make the model available in the coming months to help advance research.

Personally, I think an intelligent chatbot AI would improve Google Assistant, though you may end up with a Her situation.

When Google does release it, hopefully it doesn’t end up racist or sexually charged like Microsoft’s now-dead chatbot, Tay.

Source: Google AI Blog
