Popular dating app Bumble has launched an artificial intelligence tool that detects explicit images, such as pictures of penises, and notifies users when they’ve been sent one.

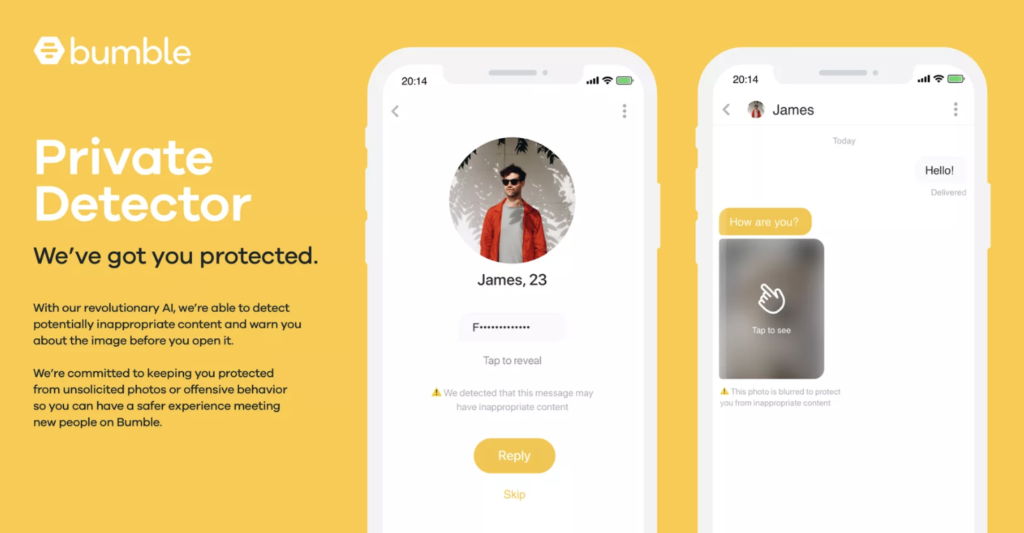

The feature, called “Private Detector,” will automatically blur inappropriate images or other content shared within a chat and notify users that they’ve received an explicit image. Users can then choose to open the image, block it, or report it to Bumble moderators.

“We’re committed to keeping you protected from unsolicited photos or offensive behavior so you can have a safer experience meeting new people on Bumble,” the app’s website reads.

The Verge also reported that Bumble co-founder Whitney Wolfe Herd has been working to pass a bill that would “make the sharing of unwanted nude images a crime, with a punishable fine of up to $500.”

Source: The Verge

MobileSyrup may earn a commission from purchases made via our links, which helps fund the journalism we provide free on our website. These links do not influence our editorial content.