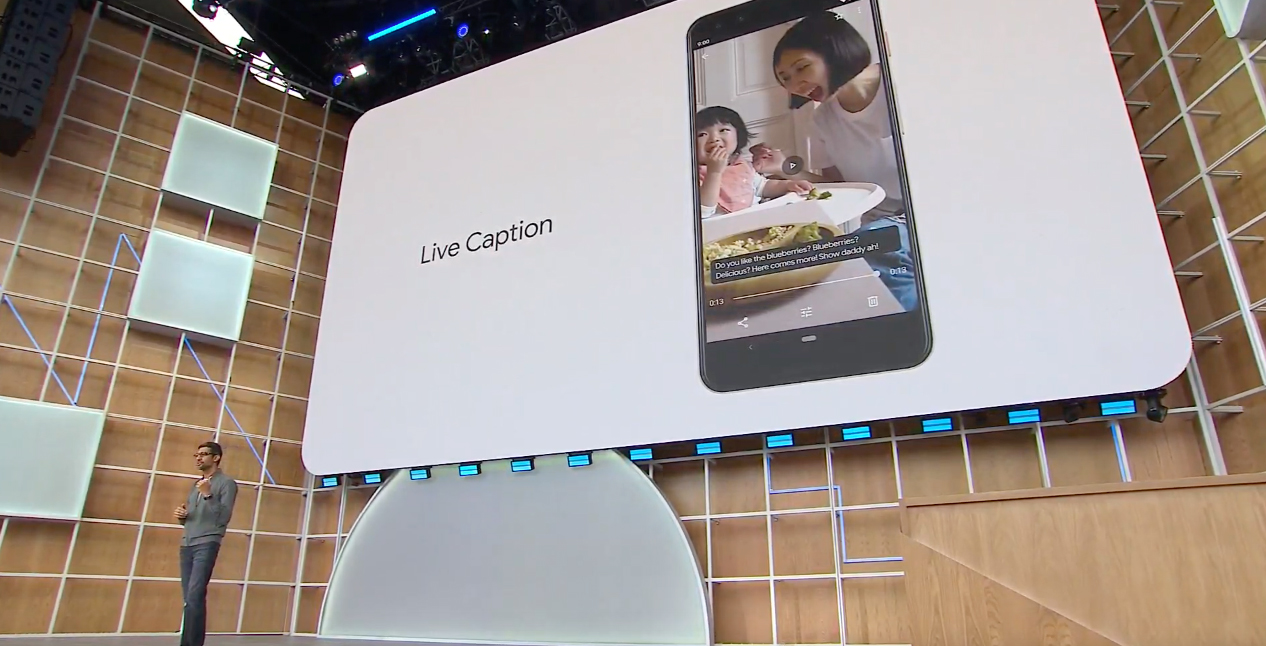

Google has revealed one of the most significant features to be included in Android Q — Live Caption.

During its I/O 2019 keynote, the company showed off how Live Caption automatically transcribes video and audio in real time.

Live Caption can be used in any app on an Android device, whether it’s a Google-owned platform like YouTube or Google Duo or a third-party app like Instagram or Pocket Casts.

Notably, Live Caption uses on-device machine learning, which means it can work offline without connecting to Google’s cloud servers. In practice, the transcription appears in a black box that users can move to whichever position on the screen they prefer.

If it has audio, now it can have captions. Live Caption automatically captions media playing on your phone. Videos, podcasts and audio messages, across any app—even stuff you record yourself. #io19 pic.twitter.com/XAW3Ii4xxy

— Google (@Google) May 7, 2019

The feature will even work when the volume is reduced or muted, since it analyzes the source audio rather than what the device outputs. Transcriptions cannot be saved for later viewing, however, as they disappear once the content is done playing.

Google noted that the feature is primarily intended to help the 466 million deaf and hard-of-hearing people around the world. To develop Live Caption, Google says it “worked closely” with the deaf community.

“Building for everyone means ensuring that everyone can access our products,” said Google CEO Sundar Pichai during the keynote. “We believe technology can help us be more inclusive, and AI is providing us with new tools to dramatically improve the experience for people with disabilities.”
