Facebook is getting better at finding and deleting hate speech and graphic violence, but it still has a ways to go in a number of other categories.

The social network published the third edition of its community standards and enforcement report today, so if you want to dig into the stats, you can view it here.

At the start of a press call, Facebook CEO Mark Zuckerberg took a moment to talk about the company’s goals for the report going forward. He also stressed the report’s importance.

He said that starting next year, the social media giant will publish these reports quarterly, since he sees them as just as necessary as the company’s financial information.

Additionally, the next release will include Instagram, the other massive social network owned by Facebook.

Zuckerberg stated that the company has a responsibility to prevent harm and keep its users safe.

The stats

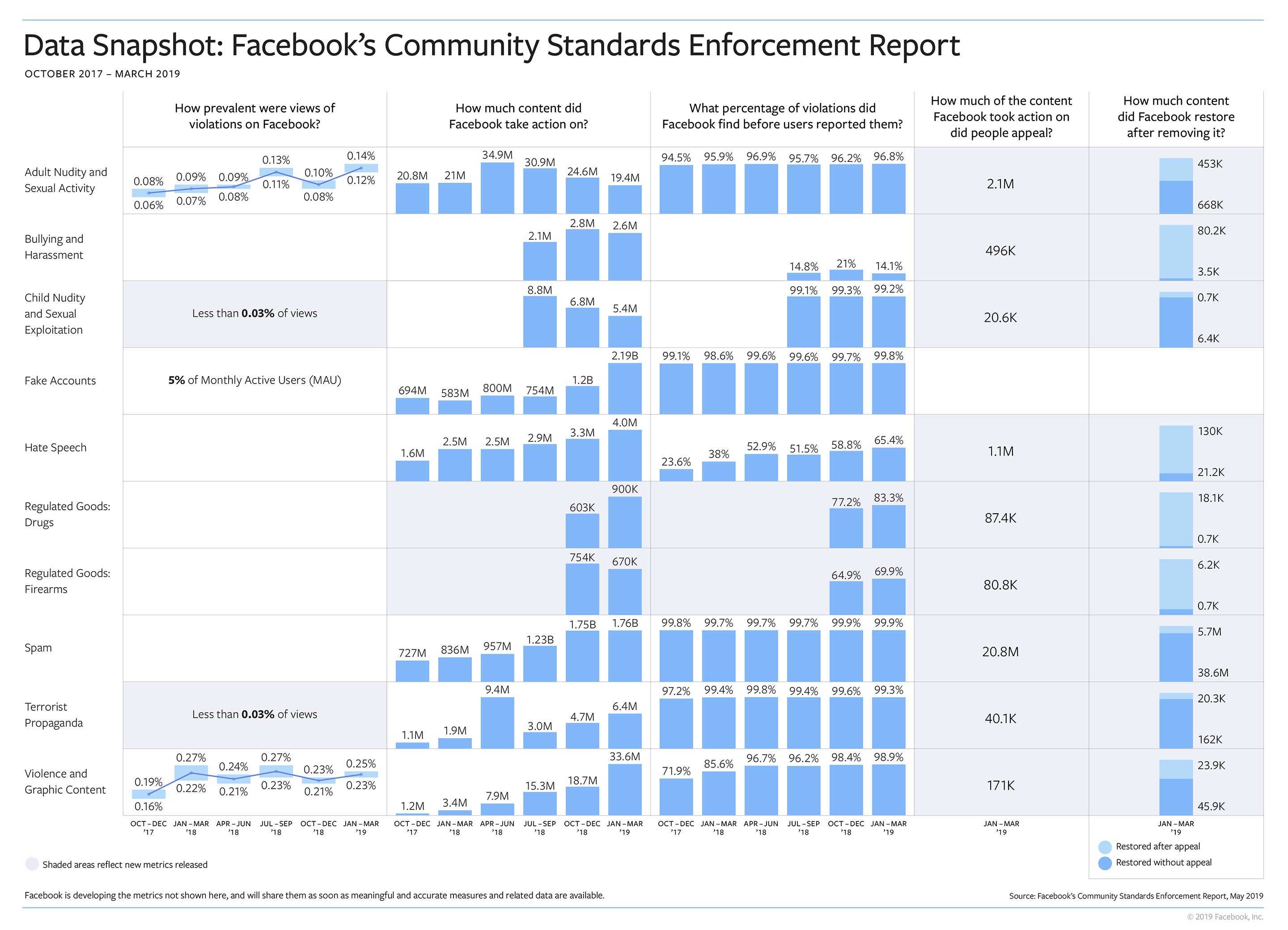

First off, the company is making progress in five areas – hate speech, fake accounts, drugs, spam and violent or graphic content.

Facebook also shared some figures on how common this content is. Out of every 10,000 posts, 11 to 14 contain adult nudity and sexual activity. On top of this, 25 posts out of every 10,000 feature graphic violence, according to the company’s data. Finally, about 5 percent of monthly active accounts are fake.
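To put those per-10,000 figures on the same footing as the 5 percent fake-account figure, here is a minimal Python sketch that converts them to percentages. The function name `per_10k_to_percent` is made up for illustration and is not something Facebook publishes; the input numbers are the ones quoted above.

```python
def per_10k_to_percent(count, sample=10_000):
    """Convert 'count posts out of sample' into a percentage."""
    return count / sample * 100


# Figures quoted in the report, per 10,000 posts.
nudity_low = per_10k_to_percent(11)
nudity_high = per_10k_to_percent(14)
graphic_violence = per_10k_to_percent(25)

print(f"Adult nudity and sexual activity: {nudity_low:.2f}% to {nudity_high:.2f}% of posts")
print(f"Graphic violence: {graphic_violence:.2f}% of posts")
# Adult nudity and sexual activity: 0.11% to 0.14% of posts
# Graphic violence: 0.25% of posts
```

In other words, both categories sit well below one percent of posts, while the fake-account share is measured against accounts rather than posts, so the two kinds of figures are not directly comparable.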

The company found less bullying, child nudity, firearm sales and terrorist propaganda compared to the two previous reports, but in some cases, it isn’t by much.

The company’s artificial intelligence programs discovered a lot of rule-breaking posts in these areas and took them down quickly, before real users reported them. Specifically, six policy areas have a 95 percent AI takedown rate.

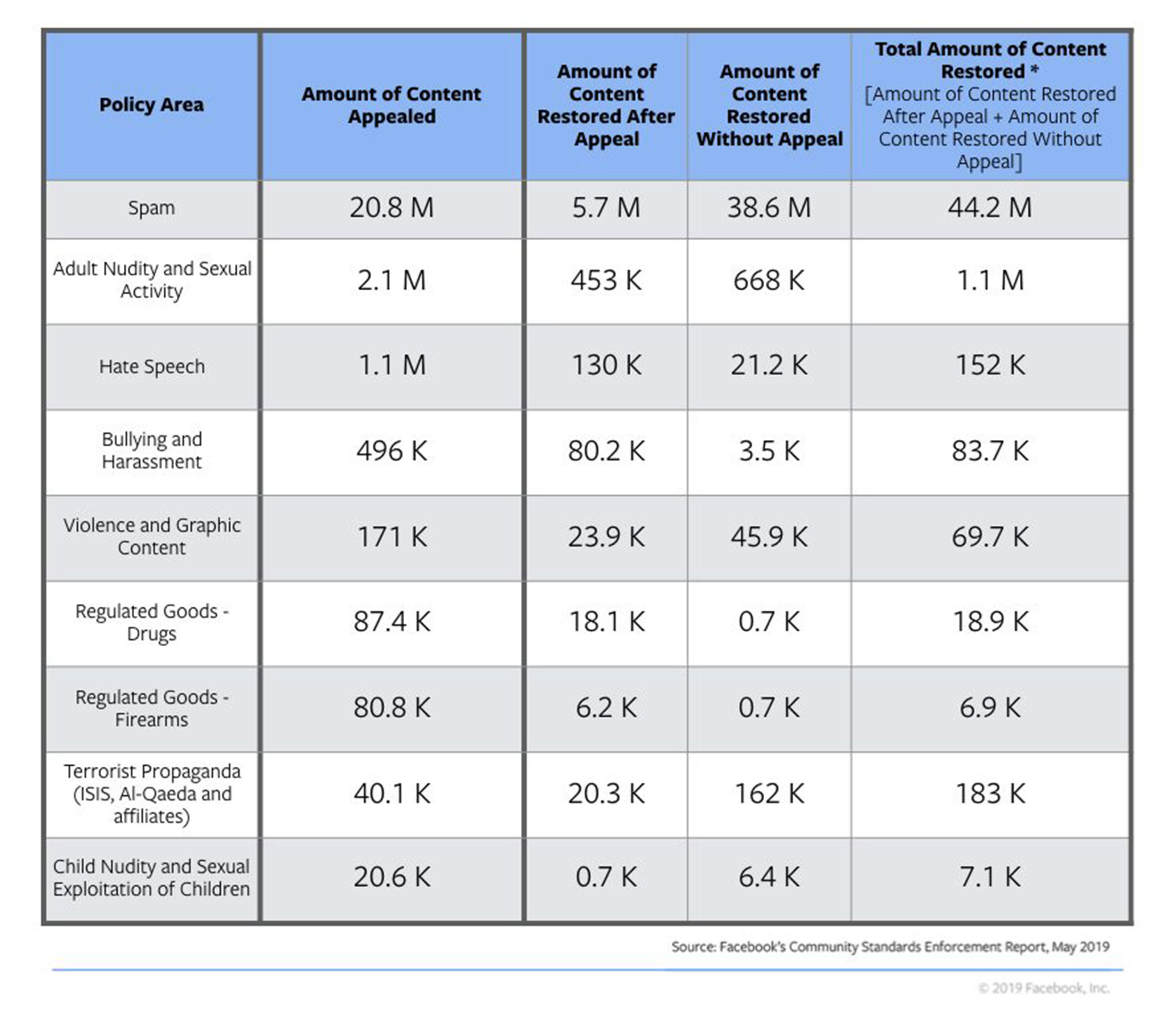

One of the most interesting things that Facebook shared in this report is how many appeals users have made over taken-down content. For example, 1.1 million posts that Facebook removed from its platform for hate speech were appealed and then 130,000 posts were restored after the appeal.
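For a sense of scale, the share of appealed hate speech removals that ended up restored can be worked out from the two numbers above. This is a rough Python sketch; the function name `restore_rate` is hypothetical, and it assumes the 130,000 restored posts all come from the 1.1 million that were appealed, as the wording suggests.

```python
def restore_rate(appealed, restored):
    """Percentage of appealed posts that were restored."""
    if appealed <= 0:
        raise ValueError("appealed must be positive")
    return restored / appealed * 100


# Hate speech figures quoted in the report.
rate = restore_rate(appealed=1_100_000, restored=130_000)
print(f"Restored after appeal: {rate:.1f}%")
# Restored after appeal: 11.8%
```

So roughly one in eight or nine appealed hate speech removals was reversed.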

Source: Facebook
