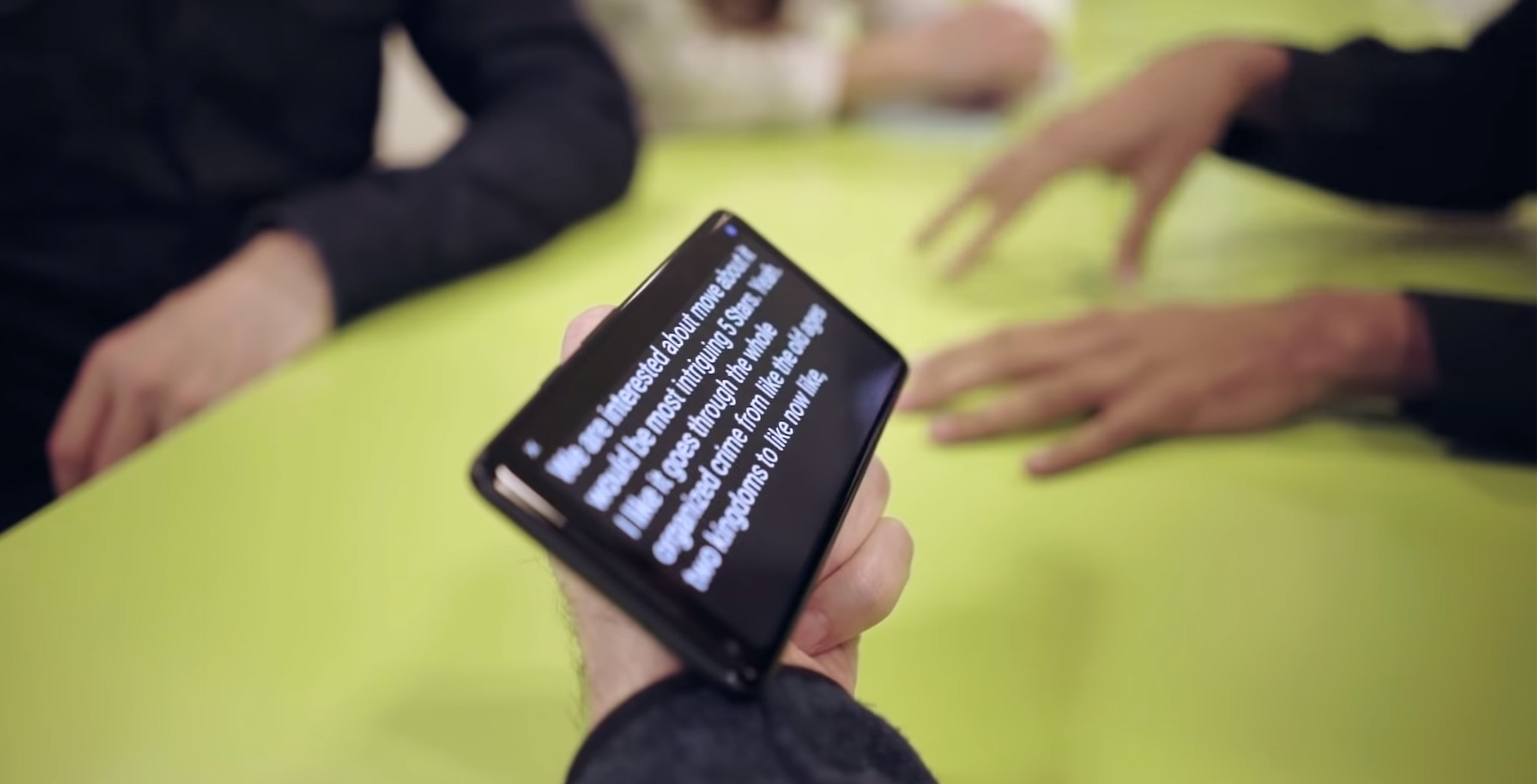

Google is expanding Android’s Live Transcribe feature with two new updates that aim to help people who are deaf or have trouble hearing.

The first feature will show users ‘sound events’ in addition to speech. For instance, the app will tell the user that a dog is barking or that someone is knocking on their door.

According to Google, sound events help users stay aware of their surroundings in a way that goes beyond speech. The feature is aimed at people who cannot hear audio cues such as clapping, laughter, music, applause, or the sound of a car speeding by.

Live Transcribe’s second new feature lets users save their transcripts for three days. Google says this will help not only people who are deaf or hard of hearing, but also anyone who uses real-time transcription for other purposes.

For instance, users who are learning a new language can use the feature to capture speech as text. It could also be useful to journalists, or to students taking lecture notes.

“We’ll continue to release more features to enrich the lives of our accessibility community and the people around them,” Brian Kemler, the product manager at Android Accessibility, said in a Google blog post.

On a lighter note, the official Android Twitter account replied to the BBC’s Dave Lee, who asked whether the feature could detect a fart as a sound event.

> Yes, our ML can do it, but it’s difficult acquiring a test data set.
>
> — Android (@Android) May 16, 2019

Google will release the two new features next month.

Source: Google
