Yesterday the internet was astorm with vitriol directed firmly at mobile darling Path, the life journal app for Android and iPhone designed to make it easy to share quickly with your closest friends.

The upshot is that, in order to facilitate the automatic and immediate ability to find people in your contact list across the budding network, that list in its entirety is uploaded to Path’s servers as a .plist file in plain text, with no apparent encryption.

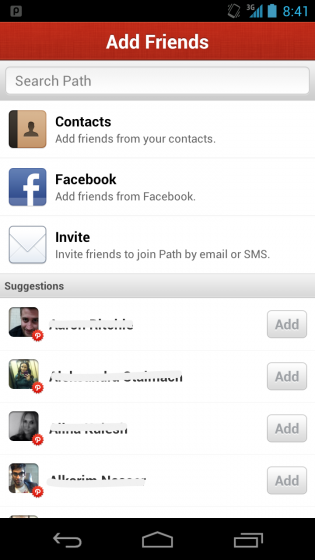

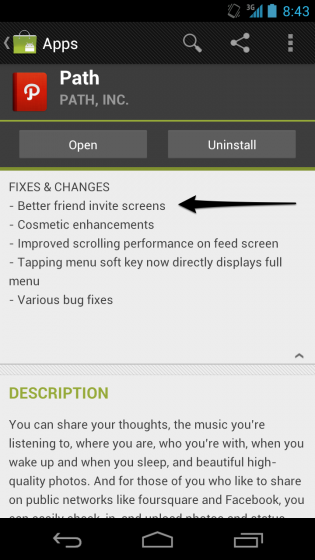

This is not the first time such scorn has been aimed at a company for surreptitiously defying not only industry convention but Apple’s policy of not transmitting sensitive user data without a user’s consent. In the latest Path update for Android, the company added an opt-in feature for friend suggestions by updating the permissions necessary to use the app. Company CEO Dave Morin claims similar changes are coming to the next iPhone update.

Naturally, users and non-users alike are furious. But such accusations have been heaped on a small app development team before. In November 2010, when Kik Messenger was proliferating like algae, it came under fire for suggesting other users across its network by uploading a local copy of a person’s contact list to its servers. But that was part of its magic: with no local input, friends just “showed up,” ready to chat. How else could this have happened if not by uploading some private information?

At the time, Kik’s CEO Ted Livingston took to Quora to say, “As part of the Kik service, we suggest people you ‘may know’ who are already on Kik. We do this by doing a one time scan of your address book to see if there is anyone already on Kik. Nothing is ever shared or stored (we need to update our privacy policy!).” Indeed, the subsequent changes to Kik (an opt-in for address book matching) have been met with grudging acceptance, and the fervour has died down.

Arguably, Path is in a similar position. The company is surely not using this data maliciously; it is unlikely anyone is even looking at it. But now that the knowledge is out there, such information would be a prime target for hackers. That dire conclusion aside, the fact that Path did all this without our consent is reprehensible.

Dave Morin has responded to the criticism by apologizing, while also insisting that the feature is necessary for frictionless sharing:

“We actually think this is an important conversation and take this very seriously. We upload the address book to our servers in order to help the user find and connect to their friends and family on Path quickly and efficiently as well as to notify them when friends and family join Path. Nothing more. We believe that this type of friend finding & matching is important to the industry and that it is important that users clearly understand it, so we proactively rolled out an opt-in for this on our Android client a few weeks ago and are rolling out the opt-in for this in 2.0.6 of our iOS Client, pending App Store approval.”

What’s absent from that statement is an explanation of why the feature was implemented in the initial release without a user’s consent. More importantly, it reinforces the practice in the industry, and raises the question: must convenience come at the cost of privacy? We expose much of our lives, and our sensitive data, every day on the web. Companies like Facebook and Google ostensibly provide our “anonymized” data to advertisers to better predict our buying patterns, much like telemarketers obtain our home phone numbers through questionable means.

If everyone stopped using Facebook tomorrow, or questioned Google’s integrity and logged out of its services for good, the industry would be no better off, since those companies have proven that targeted advertising works. Moreover, as much as the brouhaha over Path’s indiscretions has ignited concerns over privacy on it and similar services, much of the population will not hear of it. If that is the case, what will we learn from this? Probably not much, except that Path can fix what’s broken and continue to use our sensitive data to make our lives more convenient.
