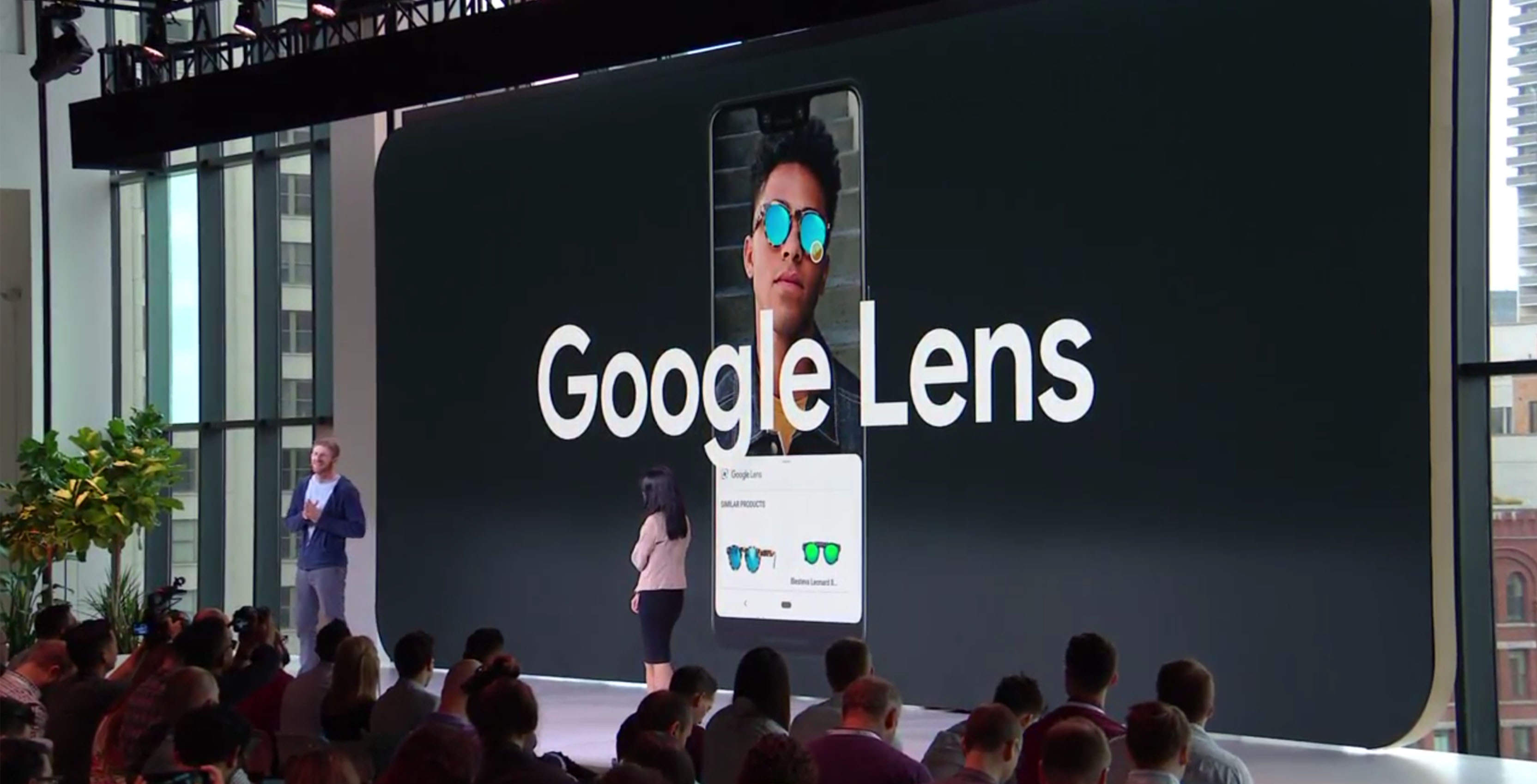

In a recent Google Keyword blog post, Aparna Chennapragada, the company’s vice president of Google Lens and AR, shared what goes into making Lens work.

Chennapragada says she sees the camera as the perfect tool for gathering information, since it’s visual and humans are visual beings.

She then goes on to explain that Lens utilizes a combination of machine learning, Google’s Knowledge Graph and computer vision to explain what it’s pointed at.

First off, Lens needs a bit of training. Google trains it on its vast library of online images so that it can learn to identify objects.

Next, Lens uses TensorFlow, Google’s open-source machine learning framework, to identify the images that the platform has decided match what you’re pointing it at.

The last piece of the puzzle is using the information that Lens has gained to match the item with Google’s Knowledge Graph. The Knowledge Graph is what builds the cards you see at the top of Google Search results that summarize answers to questions or briefly explain something.

Lens draws on the Knowledge Graph to give its users the same handy facts that the Knowledge Graph provides for web search.
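To make that flow a little more concrete, here is a minimal sketch of the last step: taking the labels a vision model produces and looking the best one up in a knowledge base to build a card. This is not Google's code. The `TOY_KNOWLEDGE_GRAPH` dictionary, the `Card` class, the 0.5 confidence threshold and the function names are all hypothetical stand-ins chosen for illustration, and the label scores are assumed to come from some image classifier.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical stand-in for a knowledge graph: entity label -> short facts.
TOY_KNOWLEDGE_GRAPH = {
    "golden retriever": {"type": "Dog breed", "summary": "A friendly, medium-large gun dog."},
    "eiffel tower": {"type": "Landmark", "summary": "A wrought-iron tower in Paris."},
}


@dataclass
class Card:
    title: str
    kind: str
    summary: str


def top_label(scores: Dict[str, float], threshold: float = 0.5) -> Optional[str]:
    """Return the highest-scoring label, or None if nothing clears the threshold."""
    if not scores:
        return None
    label, score = max(scores.items(), key=lambda item: item[1])
    return label if score >= threshold else None


def build_card(scores: Dict[str, float]) -> Optional[Card]:
    """Turn classifier scores into a card, if the best label is a known entity."""
    label = top_label(scores)
    if label is None:
        return None
    entry = TOY_KNOWLEDGE_GRAPH.get(label)
    if entry is None:
        return None
    return Card(title=label.title(), kind=entry["type"], summary=entry["summary"])


# Scores as an image classifier might report them (made-up numbers).
card = build_card({"golden retriever": 0.92, "eiffel tower": 0.03})
```

In this toy version, a confident label that has an entry in the dictionary produces a card, while a low-confidence or unknown label produces nothing, which is roughly the behaviour you would want before showing facts to a user.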

After explaining how Lens works, Chennapragada shares that the Lens team is working to help the product identify more items from less-than-perfect photos of objects. To make this happen, Google is training Lens with photos taken from odd angles or in bad lighting, for example.

She also reveals that one of the reasons Lens is so good at identifying text is that it uses the same spelling correction method as Google Search.

She adds that Lens can now recognize over one billion products, four times the number it launched with last year.
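The blog post doesn't detail how that spelling correction works, but the general idea of cleaning up misread text can be sketched with Python's standard library. The example below is an assumption-heavy illustration, not Google's method: it uses `difflib.get_close_matches` for fuzzy matching, and the `VOCABULARY` list, the 0.75 cutoff and the function names are hypothetical.

```python
import difflib

# Hypothetical vocabulary of words we expect to see, e.g. on a menu.
VOCABULARY = ["restaurant", "menu", "chicken", "espresso", "croissant"]


def correct_word(word, vocabulary=VOCABULARY, cutoff=0.75):
    """Return the closest vocabulary word, or the original word if none is close."""
    matches = difflib.get_close_matches(word.lower(), vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else word


def correct_text(text):
    """Correct each whitespace-separated word independently."""
    return " ".join(correct_word(word) for word in text.split())


# "chlcken" mimics a text recognizer confusing "i" with "l".
fixed = correct_text("chlcken menu")
```

A word that text recognition got slightly wrong is snapped to the nearest known word, while anything that isn't close to the vocabulary is left untouched rather than guessed at.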

Source: Google Keyword
