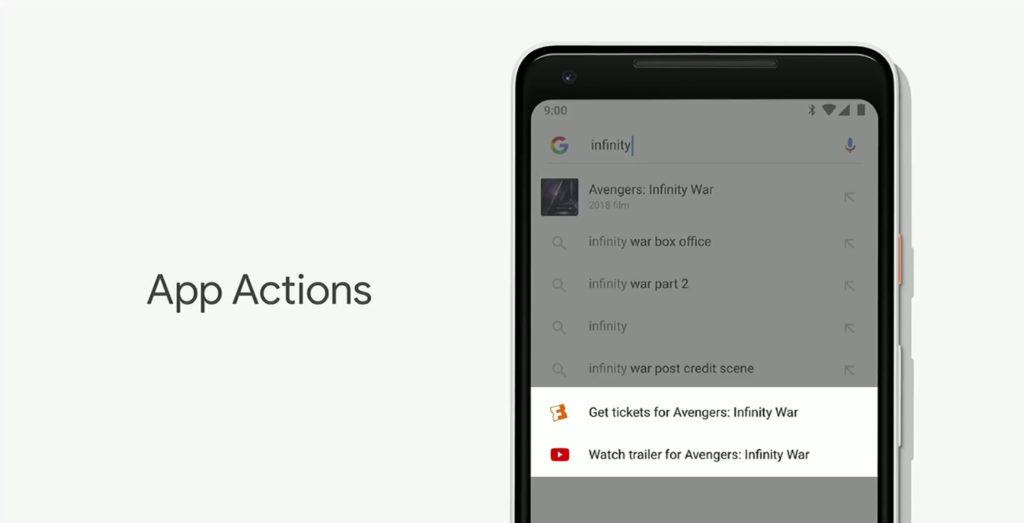

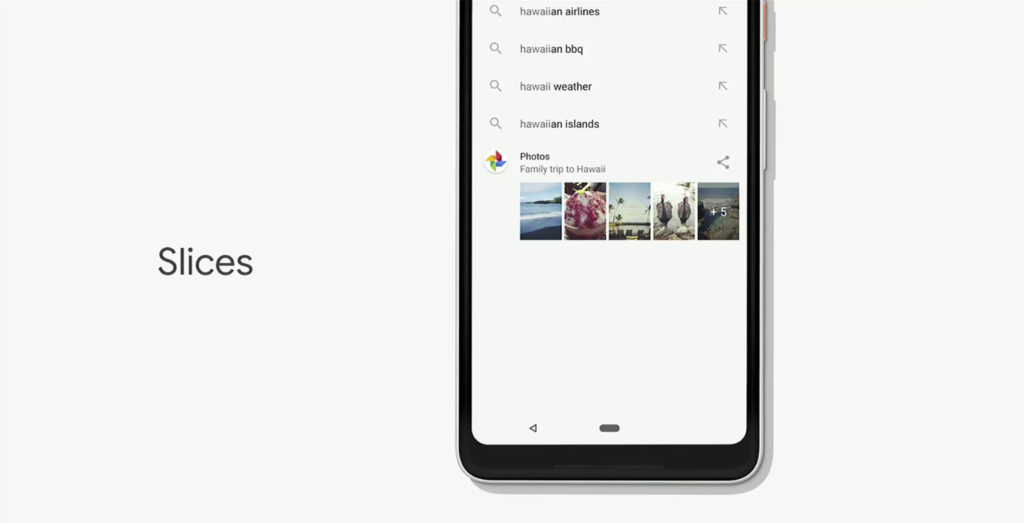

Among the new features announced for Android P at Google I/O 2018 are ‘Actions’ and ‘Slices,’ both of which work to make Android more intuitive.

Actions will allow third-party apps to offer small, task-specific shortcuts in the search bar, in the Play Store, in Assistant and on the home screen.

Actions uses deep learning to try to predict what you’ll need next. For example, when you plug in your headphones, you’ll see an ‘Action’ that lets you start playing music or call someone.

Meanwhile, Slices will show a small part of an app’s interface within Android P’s search bar. In Google’s example, the device can learn when you usually call a Lyft and predict that this is what you’re about to do next. It will then show the Lyft icon along with a piece of the app’s interface, giving you the option to start a ride request.

These two features will be in early access starting in June.
