Google started rolling out the real-time Google Lens features it showed off at Google I/O earlier this month.

Now, users can simply point their phone cameras at the world. The app proactively searches for information and places small coloured dots on recognized objects. Tapping a dot loads information about the object.

The feature relies on a combination of on-device machine learning and Google’s new cloud TPUs to identify things quickly.

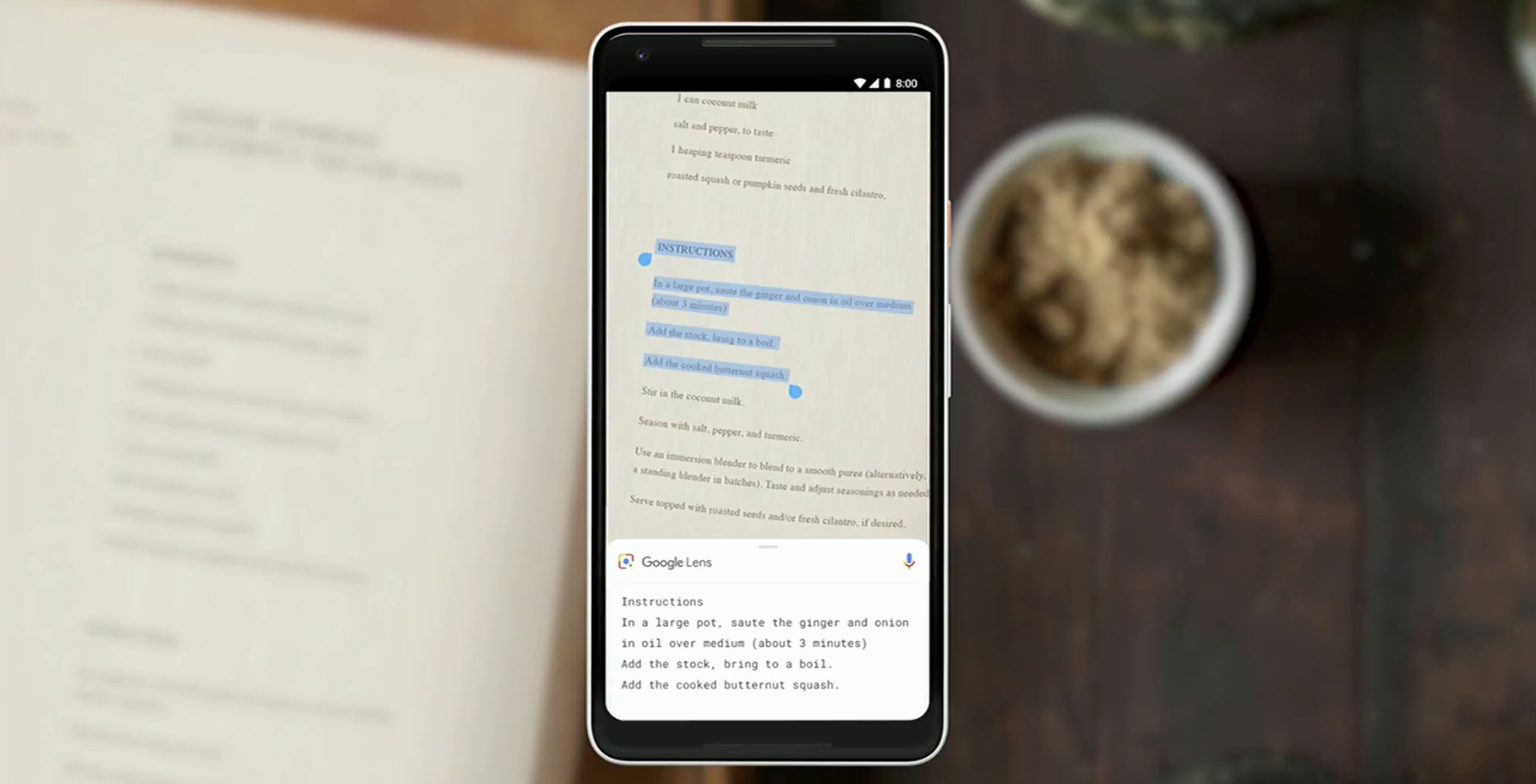

The smart text selection feature is also live. Users can now highlight text on real-world objects, then copy and paste it.

The similar objects feature is live as well. Google Lens can now display objects that look similar to the ones you’ve scanned with your phone.

Along with the new features, Google Lens is sporting a new look. A round, white card now sits at the bottom of the screen. Users can drag it up to see a list of features. When you select a recognized object, the card also pops up with information about it.

The redesign also moved the microphone icon to the right side of the screen and removed the ‘Remember this,’ ‘Important to keep’ and ‘Share’ suggestion bubbles.

Unfortunately, the new features appear to be rolling out through a server-side update. There is no app update or APK file to download that brings the changes.

As of this writing, no one at MobileSyrup has received the update. The rollout doesn’t appear to be device-specific: some users on Reddit confirmed they received the update on the Galaxy S8 and the OnePlus 5T. However, my Pixel 2 XL running the Android P beta has not received it.

Source: 9to5Google

MobileSyrup may earn a commission from purchases made via our links, which helps fund the journalism we provide free on our website. These links do not influence our editorial content.