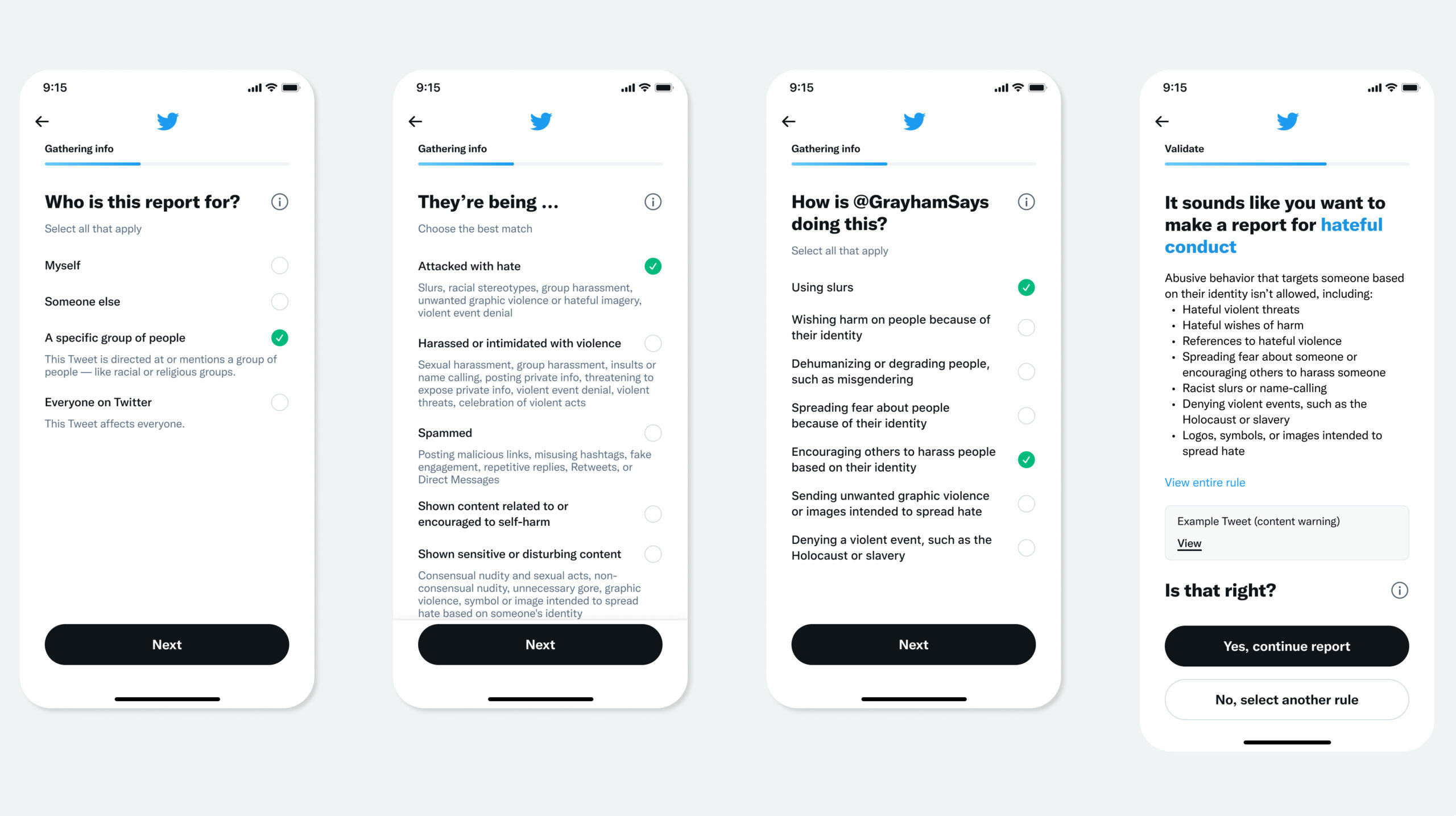

Twitter is revamping the way users report harmful tweets and other content on the microblogging platform, making it easier for people to describe what is wrong with what they have seen.

The new reporting feature, which is currently live only for a small number of Twitter users in the U.S., is expected to be widely released in 2022.

The social media giant, which recently saw its former CEO Jack Dorsey step down, says that rather than being required to specify how a tweet breaches Twitter’s rules, users will be asked whether they have been attacked with hate, harassed or threatened with violence, or shown content related to self-harm.

According to Twitter, users will also be able to explain the reason behind reporting content in their own words.

“Say you’re in the midst of an emergency medical situation. If you break your leg, the doctor doesn’t say, is your leg broken? They say, where does it hurt? The idea is, first let’s try to find out what’s happening instead of asking you to diagnose the issue,” reads Twitter’s release.

The new reporting format will allow Twitter to collect fine-grained data on tweets that do not explicitly break its rules but that users may still find harmful or unpleasant, which in turn should help the company shape future policy updates.

“The intention of these reporting flows is to empower the customer, give Twitter actionable information that we can use to improve the product and our experiences, and also improve our trust and safety process overall,” said Fay Johnson, Twitter Health’s director of product management.

Learn more about the upcoming update here.

Image credit: Twitter

Source: Twitter